Category: Transformative Technology

A systems and multidisciplinary approach to deepening our understanding and harnessing the power of disruptive technologies

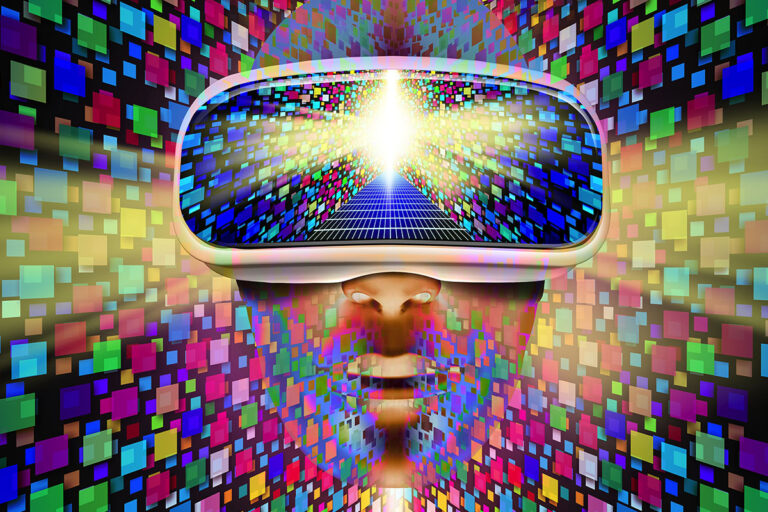

Apple’s new Vision Pro mixed-reality headset could bring the metaverse back to life

Smart wearables that measure sweat provide continuous glucose monitoring

Gen Z goes retro: Why the younger generation is ditching smartphones for ‘dumb phones’

ChatGPT’s greatest achievement might just be its ability to trick us into thinking that it’s honest

The next phase of the internet is coming: Here’s what you need to know about Web3

ChatGPT could be a game-changer for marketers, but it won’t replace humans any time soon

Canada needs to consider the user experience of migrants when designing programs that impact them

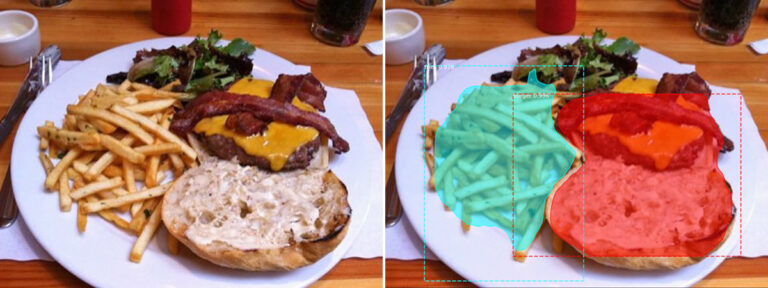

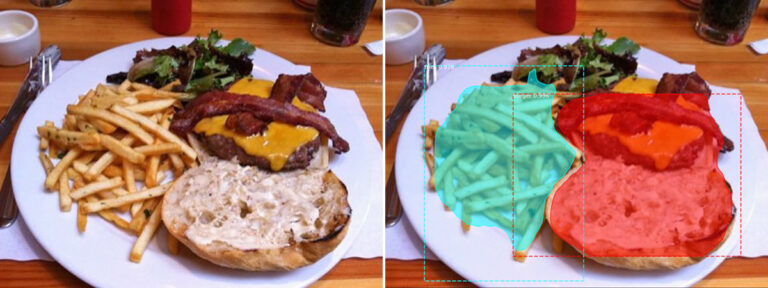

The metaverse offers challenges and possibilities for the future of the retail industry

Elon Musk’s Twitter Blue fiasco: Governments need to better regulate how companies use trademarks

What is the metaverse, and what can we do there?